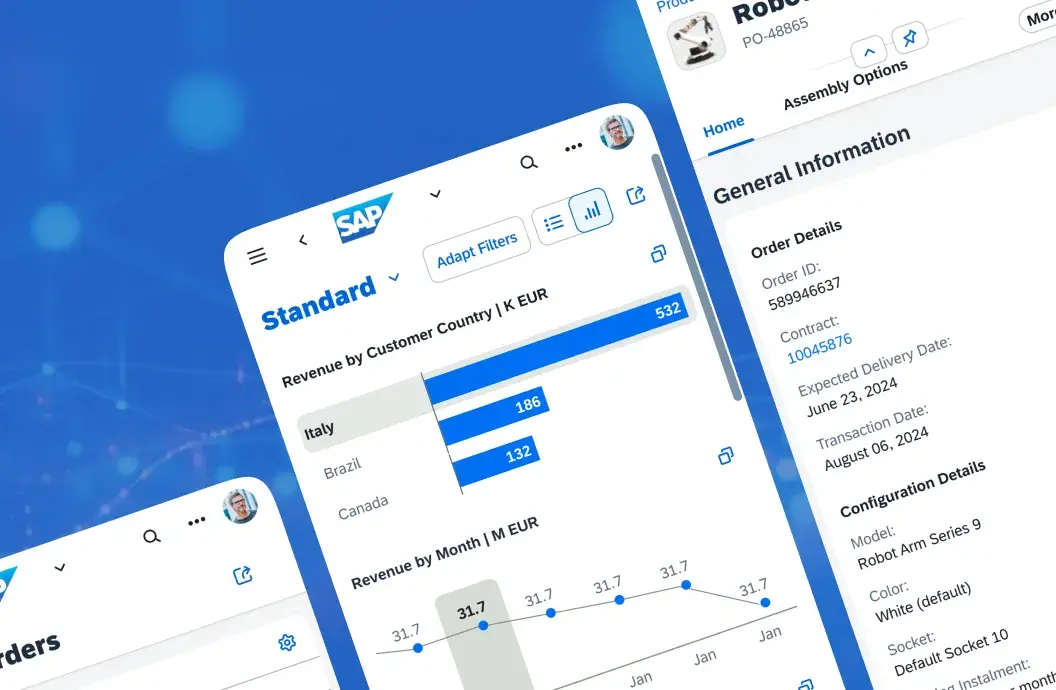

Learn about the proven approaches to integrating SAP with Snowflake and turning operational ERP data into AI-ready analytics.


Moving SAP data into Snowflake looks simple on paper. The data exists. Snowflake is ready. Yet many teams pause before the first production run. The hesitation is not about tools. It is about risk. What happens to the SAP system when data starts flowing every few seconds? Will reports still match finance postings? Will latency grow once real business volume hits?

If you are responsible for SAP data and analytics, these questions are familiar. You may already run batch extracts and feel the delay. You may be testing near-real-time access and worrying about load on the application server. Maybe you are unsure which SAP mechanisms are safe to use and which ones quietly create problems later. This uncertainty often stops projects that otherwise make technical sense.

This article walks through how SAP data can be streamed into Snowflake without guesswork. It explains the main architectural options, how real-time data movement actually works inside SAP, and where teams usually run into trouble. Keep reading to learn what to check before moving SAP data at speed, and how to design a setup that holds up in production.

Why SAP to Snowflake? Shortening the Data-to-Insight Loop

Enterprise decisions increasingly depend on how quickly operational data becomes usable for analysis. SAP records transactions in real time, but most SAP landscapes still expose that data in delayed batches. Reports arrive hours later. Forecasts rely on yesterday’s numbers. This gap slows decision-making, even when the underlying systems are stable.

Many U.S. enterprises address this gap through dedicated integration architectures rather than point-to-point extracts. SAP integration is not a one-off technical task. It is a design discipline that balances data freshness, system load, and business logic. This is where structured SAP integration approaches become critical, especially when Snowflake is the analytics platform of choice.

Once SAP data is available outside the transactional system, Snowflake changes the economics of analytics. It separates compute from storage and scales independently of SAP workloads.

This allows teams to run analytical queries, data science workloads, and model training without competing with SAP users for system resources. The value is predictability under real business volume.

Why are enterprises moving toward continuous intelligence?

Batch data loads once worked because decisions moved slowly. That is no longer the case. Pricing changes, supply disruptions, and demand shifts now happen during the business day, not overnight. When SAP data reaches analytics platforms hours later, teams react late, even if their reports look correct.

Near-real-time access changes how SAP data is used. It allows analytics to follow operational events closely, without turning the SAP system into an analytics engine. Snowflake plays a key role here, but the reason goes beyond speed.

AI/ML bottleneck

SAP’s cloud analytics tools, such as SAP Analytics Cloud (SAC), handle operational reporting well. They do not provide the elastic compute needed to train large models, run complex predictive simulations, or process cross-functional datasets. Snowflake allows data scientists to run these workloads without impacting SAP production systems.

Single source of truth

Enterprise decisions require combining SAP ERP data with external sources, such as CRM (Salesforce), marketing platforms (Adobe), and market telemetry. Snowflake provides a unified environment for cross-functional analysis, reducing the risk of inconsistencies between systems and enabling faster insights.

Data democratization

Making SAP data available in Snowflake lets analysts and data scientists use familiar tools like SQL, Python, or R. Teams no longer need ABAP expertise to explore and model data, which accelerates innovation while reducing operational bottlenecks.

Integrating SAP data effectively requires more than replication. The business meaning of SAP data resides in application logic, CDS views, and metadata. Simply moving raw tables like MARA (Material Master, which stores product information) or KNA1 (Customer Master, which stores customer details) produces a “data swamp” that is difficult to interpret. Real-time pipelines must preserve semantics first, then optimize for throughput and latency.

If your goal is controlled, reliable SAP integration for analytics or AI, we can help you get there. Start the conversation today.

Three Practical Ways to Stream SAP Data into Snowflake

The speed and reliability of SAP data delivery to Snowflake depend on the pipeline architecture. This architecture defines three concrete parameters:

- How quickly a change in SAP becomes visible in Snowflake

- How much data can be transferred during peak hours

- How much load is added to the SAP application server

Every real-time setup is a trade-off between these factors. A design that minimizes latency may increase system load. A design that protects SAP performance may accept delayed updates. The task is not to eliminate trade-offs, but to choose the one that fits the business use case.

Below are three integration approaches commonly used in U.S. enterprise SAP landscapes. Each approach addresses latency, throughput, and system load in a different way.

1. SAP SLT replication with direct Snowflake loading

SAP Landscape Transformation Replication Server captures data changes via trigger-based logging. It detects inserts, updates, and deletes using database triggers. Each change is transferred as a replication event. This allows SAP data to reach Snowflake within seconds.
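To make the trigger-based mechanism concrete, here is a minimal sketch that uses SQLite from the Python standard library as a stand-in. It is not SLT itself: the table names, trigger names, and the `I`/`U`/`D` operation codes are illustrative assumptions. It only shows the general idea that each insert, update, or delete writes a row to a logging table, which a replication process can then read in order.

```python
import sqlite3


def build_demo_db() -> sqlite3.Connection:
    """Create an in-memory table with triggers that log every change."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE sales_order (order_id TEXT PRIMARY KEY, amount REAL);

        -- Logging table (hypothetical layout): one row per change.
        CREATE TABLE change_log (
            seq       INTEGER PRIMARY KEY AUTOINCREMENT,
            order_id  TEXT NOT NULL,
            operation TEXT NOT NULL
        );

        CREATE TRIGGER trg_insert AFTER INSERT ON sales_order
        BEGIN
            INSERT INTO change_log (order_id, operation)
            VALUES (NEW.order_id, 'I');
        END;

        CREATE TRIGGER trg_update AFTER UPDATE ON sales_order
        BEGIN
            INSERT INTO change_log (order_id, operation)
            VALUES (NEW.order_id, 'U');
        END;

        CREATE TRIGGER trg_delete AFTER DELETE ON sales_order
        BEGIN
            INSERT INTO change_log (order_id, operation)
            VALUES (OLD.order_id, 'D');
        END;
        """
    )
    return conn


def read_changes(conn: sqlite3.Connection, after_seq: int = 0) -> list:
    """Return change events newer than after_seq, oldest first."""
    cur = conn.execute(
        "SELECT seq, order_id, operation FROM change_log "
        "WHERE seq > ? ORDER BY seq",
        (after_seq,),
    )
    return cur.fetchall()
```

A replication job would remember the last `seq` it processed and call `read_changes` with it. The sketch also makes the overhead visible: every business write triggers a second write to the logging table.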

This approach supports large tables and continuous change volumes. Finance documents, inventory movements, and production confirmations are typical examples. SLT allows teams to define filters, table mappings, and replication rules. These controls help limit the scope of replicated data and stabilize throughput under load.

SLT introduces additional system requirements and must be planned carefully before deployment. It typically requires a dedicated SLT server, initial sizing based on data volume and change frequency, and continuous SAP performance monitoring. The trigger-based mechanism adds load to the database and application stack, especially during peak posting periods. SLT is often chosen when teams need near-real-time replication and are prepared to monitor and manage the ERP impact.

Best suited for: mission-critical finance and supply chain data with strict latency requirements.

2. Application-layer extraction using ODP and OData

Operational Data Provisioning exposes SAP data through standard extractors and services at the application layer. Data can be accessed through ODP-enabled sources — often via OData services — and then processed by cloud-native tools such as AWS Glue, Azure Data Factory, or Google Cloud Dataflow.

Unlike SLT, ODP accessed through OData or application-layer extractors does not rely on database triggers or shadow tables. (ODP can also run on top of SLT, which does rely on triggers, but the OData and extractor route stays “Clean Core” compliant.)

This method respects SAP application logic more naturally. It works well with CDS views and predefined extractors, which help preserve business meaning. It also aligns with Clean Core principles, since it avoids database-level changes and custom triggers when implemented via OData or standard extractors.

The cost of this approach is higher latency and added application server load. ODP-based extraction usually runs in micro-batches rather than true streaming mode. It also consumes application server resources, which limits how frequently data can be pulled without affecting users.
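The micro-batch pattern can be sketched as a polling loop that carries a delta position from one call to the next. Everything here is a hypothetical stand-in: `FakeDeltaSource`, `fetch_delta`, and the integer token are assumptions for illustration, not the ODP or OData API. A real pipeline would also wait between polls, which is exactly where the minute-level latency comes from.

```python
from dataclasses import dataclass, field


@dataclass
class FakeDeltaSource:
    """Hypothetical stand-in for a delta-enabled source."""

    rows: list = field(default_factory=list)

    def fetch_delta(self, token: int, max_rows: int):
        """Return rows after the given position plus the new position."""
        batch = self.rows[token:token + max_rows]
        return batch, token + len(batch)


def run_micro_batches(source, token: int, max_rows: int, max_polls: int):
    """Pull deltas in bounded batches until the source is drained."""
    batches = []
    for _ in range(max_polls):
        batch, token = source.fetch_delta(token, max_rows)
        if not batch:
            break  # nothing new; a real job would sleep and poll again
        batches.append(batch)
    return batches, token
```

Capping `max_rows` and the polling frequency is the lever for protecting the application server: smaller, less frequent batches mean less load but staler data.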

Best suited for: organizations that prioritize SAP-standard patterns and accept minute-level latency.

3. External CDC platforms using log-based capture

Third-party CDC platforms capture changes directly from database logs rather than SAP application processes. This reduces load on the SAP system and simplifies setup. Many of these tools offer prebuilt connectors and managed pipelines into Snowflake.

This approach works well in environments where Snowflake is one of several data targets. It is best suited for non-SAP-centric architectures, where SAP is one of multiple sources rather than the primary system of record. It also fits teams that want faster time-to-value without deep SAP configuration. However, these tools often extract data at a technical level. Business logic, time validity, and calculation rules must be rebuilt downstream.

Careful modeling is required to avoid producing fast but misleading datasets. Without this step, analytics teams may spend more time validating numbers than using them.
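Time validity is a good example of logic that has to be rebuilt downstream. The sketch below assumes records carry valid-from and valid-to dates (SAP tables commonly use fields such as DATAB and DATBI for this, with 9999-12-31 as the open end). The record layout and the function name are illustrative assumptions.

```python
from datetime import date


def valid_on(records: list, key: str, as_of: date):
    """Return the single record for key that is valid on as_of, or None."""
    matches = [
        r for r in records
        if r["key"] == key and r["valid_from"] <= as_of <= r["valid_to"]
    ]
    if len(matches) > 1:
        # Overlapping validity periods usually point to a modeling or
        # replication problem, so fail loudly instead of guessing.
        raise ValueError(f"overlapping validity periods for {key!r}")
    return matches[0] if matches else None
```

Without a step like this, a raw log-based feed delivers every version of a record, and a naive join picks up prices or assignments that were not valid on the posting date.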

Best suited for: multi-cloud landscapes and teams optimizing for low SAP impact.

Comparing the approaches

The table below summarizes how these methods compare across common decision criteria.

| Feature | SAP SLT | ODP / OData | Third-party CDC |
| --- | --- | --- | --- |
| Data latency | Near-real-time (seconds) | Micro-batch (minutes) | Near-real-time (log-based) |
| Throughput | High | Medium | High |
| SAP system load | Moderate | High (application layer) | Low |
| Setup effort | High | Medium | Low |
| Cloud alignment (AWS / Azure / GCP) | Hybrid | Strong | Strong |

Choosing between these options is less about preference and more about constraints. In the next section, we look closer at why SAP data cannot be treated like a standard database feed, and where most real-time projects break down once they reach production.
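As a rough illustration of constraint-driven selection, the comparison above can be encoded as data and filtered. The ratings are copied from the comparison; the function name and the two constraints chosen are assumptions, and a real evaluation would weigh licensing, semantics, and team skills as well.

```python
# Ratings taken from the comparison in this article.
APPROACHES = {
    "SAP SLT": {"near_real_time": True, "sap_load": "moderate"},
    "ODP / OData": {"near_real_time": False, "sap_load": "high"},
    "Third-party CDC": {"near_real_time": True, "sap_load": "low"},
}

LOAD_RANK = {"low": 0, "moderate": 1, "high": 2}


def shortlist(needs_near_real_time: bool, max_sap_load: str) -> list:
    """Return approaches that satisfy the latency and load constraints."""
    limit = LOAD_RANK[max_sap_load]
    return [
        name
        for name, props in APPROACHES.items()
        if LOAD_RANK[props["sap_load"]] <= limit
        and (props["near_real_time"] or not needs_near_real_time)
    ]
```

For example, `shortlist(True, "moderate")` leaves SLT and third-party CDC, and the choice between them then depends on how much business logic must be preserved at the source.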

Unsure which real-time integration approach will work best? We help enterprises evaluate methods and implement them safely.

How to Handle SAP Complexity Beyond Raw Tables

Integrating SAP data into Snowflake is more than moving tables. Successful pipelines avoid common pitfalls that block analytics or break business logic. Understanding SAP’s data structures and application logic is essential to keeping data usable once it lands in Snowflake.

Real-time replication without context can create fragmented, confusing datasets. To prevent this, teams need to decode SAP structures and preserve the meaning embedded in application logic.

Decoding SAP data structures

Older SAP ERP systems stored some critical information in proprietary pool and cluster tables. In SAP S/4HANA, these have been converted to transparent tables and are technically accessible, but extracting them directly still bypasses the application logic, hierarchies, and security filters embedded in SAP. Without careful extraction, important entities like Material Master or Customer Master arrive in Snowflake as disconnected fragments.

LeverX uses ABAP Core Data Services (CDS) Views to expose SAP data as logically joined, business-ready datasets. CDS Views provide a structured layer that translates SAP tables into readable entities while maintaining relationships, hierarchies, and metadata. This approach ensures that Snowflake receives consistent, interpretable data, not a “data swamp” of isolated tables.
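CDS views are defined in ABAP, so the following is only an analogy: a plain SQL view, built with SQLite from the Python standard library, that joins the material master table MARA with its text table MAKT. The field names (MATNR, MTART, MEINS, SPRAS, MAKTX) are standard SAP fields; the sample rows and the view name are made up for illustration.

```python
import sqlite3


def build_material_view() -> sqlite3.Connection:
    """Create sample MARA/MAKT tables and a readable view on top of them."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE MARA (MATNR TEXT PRIMARY KEY, MTART TEXT, MEINS TEXT);
        CREATE TABLE MAKT (
            MATNR TEXT, SPRAS TEXT, MAKTX TEXT,
            PRIMARY KEY (MATNR, SPRAS)
        );

        INSERT INTO MARA VALUES ('M-100', 'FERT', 'PC'), ('M-200', 'ROH', 'KG');
        INSERT INTO MAKT VALUES
            ('M-100', 'E', 'Pump housing'),
            ('M-100', 'D', 'Pumpe'),
            ('M-200', 'E', 'Steel sheet');

        -- One row per material with an English description attached.
        CREATE VIEW material_readable AS
        SELECT m.MATNR AS material,
               t.MAKTX AS description,
               m.MTART AS material_type,
               m.MEINS AS base_unit
        FROM MARA AS m
        LEFT JOIN MAKT AS t
               ON t.MATNR = m.MATNR AND t.SPRAS = 'E';
        """
    )
    return conn
```

Replicating only MARA would deliver material numbers without descriptions, and replicating MAKT without the language filter would duplicate each material. The view encodes both decisions once, at the source.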

Preserving and recreating application logic

Moving data alone solves only part of the integration challenge. SAP embeds business rules and transformation logic in its applications. Simple replication of tables does not preserve calculations, validations, or conditional rules.

To keep data “business-ready” in Snowflake, LeverX recreates key SAP logic using tools like dbt (data build tool) or Coalesce. This ensures that replicated data behaves as it would inside SAP, supporting accurate reporting, analytics, and AI/ML models without back-and-forth validation in the ERP system.
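One small but typical example of logic that must be recreated is currency decimals. SAP stores amounts with two decimal places regardless of currency, and the application layer shifts the value for currencies that use a different number of decimals (maintained in table TCURX). The sketch below is written in Python for illustration, although in practice the same rule would usually live in a dbt or Coalesce model. The dictionary is a hypothetical excerpt, not a complete currency list.

```python
from decimal import Decimal

# Hypothetical excerpt of currency decimal settings (table TCURX in SAP).
# Currencies not listed are assumed to use two decimals.
CURRENCY_DECIMALS = {"JPY": 0, "KWD": 3}


def to_business_amount(stored: Decimal, currency: str) -> Decimal:
    """Convert a stored two-decimal amount to its real-world value."""
    decimals = CURRENCY_DECIMALS.get(currency, 2)
    # Shift the decimal point by the difference to the two-decimal storage.
    return stored * (Decimal(10) ** (2 - decimals))
```

With this rule, a stored value of `100.00` in JPY becomes 10,000 yen, while a USD amount is returned unchanged. Skipping the step silently understates yen amounts by a factor of 100.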

What Is Changing for SAP and Snowflake in U.S. Enterprises

In the U.S. market, SAP-to-Snowflake integration has shifted from an analytics task to infrastructure planning. The focus is no longer limited to reporting speed. It now includes how data supports automated decisions, how much data is physically moved, and how security rules are enforced across systems.

Many U.S. enterprises describe this shift as moving toward an agentic setup. In practice, this means analytics and models react to SAP events as they happen. A sales order, a stock change, or a payment posting can trigger forecasts, alerts, or automated actions without manual intervention. This only works when SAP data is available quickly, governed correctly, and structured for reuse.

Three priorities shape how U.S. companies design these pipelines today.

- AI readiness tied to operational data: Models need direct access to current SAP data to produce usable results.

- Reduced data movement: Copying large SAP datasets increases risk and cost.

- Clear cost control across hybrid cloud setups: Data transfer and compute usage must be predictable and auditable.

The table below summarizes how these priorities translate into technical choices.

Strategic Priorities in U.S. SAP-Snowflake Architectures

| Strategic priority | Technical focus | U.S. compliance and technology context |
| --- | --- | --- |
| AI readiness | Using Snowflake Snowpark and Cortex with SAP-derived datasets | SAP CDS views are used to deliver finance and supply chain data to models, reducing incorrect predictions caused by stale or partial inputs |
| Data minimization | Query-first and zero-copy patterns | SEC cybersecurity guidance pushes teams to limit data duplication and reduce exposure of sensitive records |
| Hybrid cloud cost control | Private connectivity and in-platform processing | SOC 2 and HIPAA requirements drive the use of AWS and Azure Private Link, and they discourage public data transfer paths |

These priorities explain why older integration patterns are being replaced.

How SAP data architectures are shifting in North America

Regulatory pressure, cloud adoption, and data volume make the U.S. market distinct. Enterprises operate across multiple clouds while facing strict disclosure and audit requirements. This combination has accelerated changes in how SAP data is handled.

Several patterns are becoming standard.

- Batch ETL is being phased out. Nightly extracts delay decisions and increase system load during fixed windows. Many teams now rely on Change Data Capture and event-based pipelines. The goal is simple. A business event in SAP should appear in Snowflake within seconds, not hours.

- Governance is applied before analytics. New SEC cybersecurity rules push organizations to prove how sensitive data is handled. U.S. companies increasingly apply masking and access rules before SAP data reaches notebooks or dashboards. Snowflake governance tools are often extended to SAP-fed datasets so that personal or regulated fields are restricted by default.

- Transformation logic moves into Snowflake. Third-party ETL platforms add cost and data movement. As a result, many teams now run transformation code directly in Snowflake using Snowpark. Python or SQL logic replaces ABAP-based post-processing, reducing outbound data volume and simplifying audit trails.

This shift also affects compliance-sensitive use cases. For organizations subject to CCPA or HIPAA, joining SAP customer data with external datasets requires strict control. Snowflake Data Clean Rooms are increasingly used for this purpose. They allow cross-dataset analysis without exposing raw personal records, which supports analytics while meeting regulatory requirements.
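The idea of restricting regulated fields by default can be sketched in a few lines. In Snowflake this would normally be handled by the platform's own governance features rather than application code, so treat the following as an illustration of the rule only. The field classification, the role name, and the mask value are hypothetical.

```python
# Hypothetical classification of sensitive columns and privileged roles.
SENSITIVE_FIELDS = {"customer_name", "tax_number"}
PRIVILEGED_ROLES = {"FINANCE_AUDITOR"}


def mask_row(row: dict, role: str) -> dict:
    """Return a copy of row with sensitive fields masked for most roles."""
    if role in PRIVILEGED_ROLES:
        return dict(row)
    return {
        name: "***MASKED***"
        if name in SENSITIVE_FIELDS and value is not None
        else value
        for name, value in row.items()
    }
```

The important design choice is the default: a role sees masked values unless it is explicitly listed, not the other way around.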

What Are the Real Costs of Moving SAP Data to Snowflake?

Real-time SAP to Snowflake integration improves data availability, but it also introduces costs that are easy to underestimate. These costs are not always visible during initial design workshops. They surface later, when pipelines run continuously under real business load.

For U.S. enterprises, cost control starts with understanding where money and risk accumulate. Licensing, cloud consumption, and SAP system load all need attention before real-time replication moves into production.

SAP licensing and runtime constraints

Not every SAP landscape is licensed for unrestricted third-party data extraction. Some scenarios require specific runtime or extraction licenses, such as Open Hub or ODP-related usage. Ignoring these constraints can lead to compliance issues during audits.

Before enabling real-time pipelines, enterprises should confirm that their SAP licensing model permits continuous data extraction. This step avoids remediation costs and unplanned contract discussions later.

Snowflake credit consumption in real time

Near-real-time ingestion changes how Snowflake credits are consumed. If left unmanaged, continuous Snowpipe loads and always-on warehouses can drive costs quickly. This is especially visible when data volume spikes during peak business hours.

Cost control depends on technical configuration. Intelligent clustering, automated warehouse suspension, and workload isolation help prevent unnecessary credit usage. Real-time does not require constant computation, but it does require careful scheduling and monitoring.
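A back-of-the-envelope comparison shows why warehouse suspension matters. The sketch assumes standard warehouses billed at 1, 2, 4, and 8 credits per hour for X-Small through Large, with per-second billing and a 60-second minimum each time a warehouse resumes. It ignores idle time before auto-suspend kicks in and does not model serverless ingestion such as Snowpipe, so the result is a lower bound rather than a forecast.

```python
# Credits per hour for standard warehouse sizes.
CREDITS_PER_HOUR = {"XSMALL": 1, "SMALL": 2, "MEDIUM": 4, "LARGE": 8}


def always_on_credits(size: str, hours: float) -> float:
    """Credits used by a warehouse that never suspends."""
    return CREDITS_PER_HOUR[size] * hours


def suspended_credits(size: str, runs: int, seconds_per_run: float) -> float:
    """Credits used when the warehouse resumes only for each load run.

    Each resume is billed for at least 60 seconds.
    """
    billed_seconds = runs * max(seconds_per_run, 60)
    return CREDITS_PER_HOUR[size] * billed_seconds / 3600
```

For a Small warehouse, 24 hours always on comes to 48 credits, while 96 loads per day of 90 seconds each come to about 4.8 credits under these assumptions.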

Managing SAP application load

Change Data Capture adds overhead to the SAP source system. Triggers, extractors, or log readers consume resources that compete with business users. The risk becomes visible during month-end closing, inventory runs, or payroll processing.

LeverX addresses this through structured load analysis before activation. A replication risk assessment evaluates peak usage windows, data volume, and system limits. The goal is simple: keep SAP response times stable while data flows continuously to Snowflake.

Securing data transit without extra cost

Data movement between SAP and Snowflake must meet U.S. compliance requirements. Using AWS PrivateLink or Azure Private Link keeps traffic off the public internet. This reduces exposure, improves latency, and supports SOC 2 and HIPAA controls.

Private connectivity can also help avoid unexpected network egress fees that appear with public endpoints. Security and cost efficiency align when data paths are designed correctly from the start.

Want a clear picture of costs before you commit? Get an estimate based on your SAP system, data volume, and real usage patterns.

How Is SAP Data Secured in a Real-Time Snowflake Pipeline?

Any SAP-Snowflake data pipeline must be designed with compliance, auditability, and access control in mind from day one. Otherwise, it will not pass internal or regulatory review.

That’s why security is built directly into how we design and run data flows. What this looks like in practice:

- Encryption from start to finish: Data stays protected at every stage of its journey. It remains encrypted inside SAP, travels through secure TLS connections, and is stored encrypted in Snowflake. This ensures sensitive business and personal data is never exposed during movement or storage.

- Access control that mirrors SAP logic: SAP authorization models are detailed and often complex. When data lands in Snowflake, that structure should not disappear. We align SAP permissions with Snowflake role-based access so users see only what they are allowed to see.

- Clear audit trails for every data movement: U.S. regulations require traceability. Enterprises must be able to show when data was extracted, where it moved, and who accessed it. This is especially critical for SOX, HIPAA, and internal security audits. A compliant SAP to Snowflake pipeline maintains audit logs across systems. These logs provide a clear chain of custody for replicated data and support incident investigation without manual reconstruction.
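A chain of custody can be as simple as one audit record per extracted batch. The sketch below is a hypothetical record layout, not a description of any specific product: it stores who ran the extraction, when, how many rows moved, and a checksum that can be recomputed on the Snowflake side to confirm that the batch arrived unchanged.

```python
import hashlib
import json
from datetime import datetime, timezone


def batch_checksum(rows: list) -> str:
    """Return a SHA-256 checksum over a canonical JSON form of the rows."""
    canonical = json.dumps(rows, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def audit_record(pipeline: str, source_object: str, rows: list, actor: str) -> dict:
    """Build one audit entry for an extracted batch (hypothetical layout)."""
    return {
        "pipeline": pipeline,
        "source_object": source_object,
        "actor": actor,
        "row_count": len(rows),
        "checksum": batch_checksum(rows),
        "extracted_at": datetime.now(timezone.utc).isoformat(),
    }
```

Because keys are sorted before hashing, the checksum does not depend on the column order inside each row, but it does change if any value or the row order changes.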

How Can You Turn SAP-Snowflake Integration into a Stable Foundation?

Real-time SAP data in Snowflake can deliver measurable outcomes. But only if extraction, transformation, and monitoring are planned from the start. Early technical choices affect long-term reliability, cost, and compliance.

Teams that see results sooner tend to make a few disciplined choices early on.

Prioritize CDS views over table extraction

Direct table extraction removes business context. CDS views retain calculation logic, joins, and authorizations at the SAP layer. By extracting from CDS views, Snowflake receives data that is immediately usable for analytics, planning, and predictive models.

This reduces downstream transformation effort and ensures data alignment as SAP evolves. It also supports governance and audit requirements, keeping the pipeline traceable and auditable.

Start with a high-impact use case

Implementing a pilot in processes such as Sales & Operations Planning or inventory optimization exposes critical performance and integration requirements early. These processes involve frequent refresh cycles, cross-domain joins, and large data volumes.

If the pipeline works reliably here, it scales safely to other business domains without repeated redesign.

Make observability a core component

Data pipelines succeed when failures are detected early. Automated monitoring of freshness, volume, and schema changes prevents downstream errors. Observability reduces operational risk and provides confidence that SAP data in Snowflake is accurate and complete.
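The three checks named above (freshness, volume, and schema) can be sketched as a single function that returns a list of issues for a loaded batch. The thresholds, parameter names, and issue labels are assumptions for illustration; dedicated observability tools implement far richer versions of the same idea.

```python
from datetime import datetime, timedelta


def check_batch(
    last_loaded_at: datetime,
    row_count: int,
    columns: list,
    *,
    now: datetime,
    max_lag: timedelta,
    expected_rows: int,
    tolerance: float,
    expected_columns: list,
) -> list:
    """Return the names of failed checks; an empty list means healthy."""
    issues = []
    # Freshness: the last load must be recent enough.
    if now - last_loaded_at > max_lag:
        issues.append("stale")
    # Volume: row count must stay within a tolerance band.
    low = expected_rows * (1 - tolerance)
    high = expected_rows * (1 + tolerance)
    if not low <= row_count <= high:
        issues.append("volume")
    # Schema: added or dropped columns are both flagged.
    if set(columns) != set(expected_columns):
        issues.append("schema")
    return issues
```

Running a check like this after every load turns silent failures, such as an extractor that stops delivering or an SAP upgrade that adds a field, into alerts before they reach a dashboard.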

Why Teams Work With LeverX

LeverX supports SAP–Snowflake programs end-to-end. We work across:

- SAP-side configuration and data exposure

- Secure and compliant data movement

- Transformation logic implemented directly in Snowflake using modern tooling

Our role is to make sure your data foundation holds up under real business pressure, such as audits, scale, and future AI initiatives.

Is your organization planning or revisiting an SAP-Snowflake setup? A short technical discussion is often enough to identify risks, validate assumptions, and define next steps with confidence.
